Lots of AP Stat problems, both multiple choice and free response, make it clear what inference procedure they expect you to use for a problem, be it a hypothesis test, confidence interval, etc. But there is a brand of AP Free Response problem that claims to be agnostic. An excellent example is 2012 #4, copied and pasted below.

A survey organization conducted telephone interviews in December 2008 in which 1,009 randomly selected adults in the United States responded to the following question.

At the present time, do you think television commercials are an effective way to promote a new product?

Of the 1,009 adults surveyed, 676 responded “yes.” In December 2007, 622 of 1,020 randomly selected adults in the United States had responded “yes” to the same question. Do the data provide convincing evidence that the proportion of adults in the United States who would respond “yes” to the question changed from December 2007 to December 2008?

This question SHOULD be pretty easy to answer using a confidence interval or a hypothesis test. But I’m not sure that answering it with a confidence interval is a solid way to earn full credit on AP. Below, my attempt, following the Identify, Check, Calculate, Conclude procedure that we use in CPM but is really just the AP rubric procedure with bold font. (Note that I wouldn’t expect students to do it quite this way – my loquaciousness, especially when a keyboard is under my fingers, can be reduced significantly in a timed situation). Note: some of the equations below may not appear in a feed reader.

Identify: I will use a 95% confidence interval to evaluate the reasonability of the claim “p2 – p1 ≠ 0,” where p2 is the proportion of U.S. adults who would have responded yes in 12/2008 and p1 is the proportion of U.S. adults who would have responded yes in 12/2007.

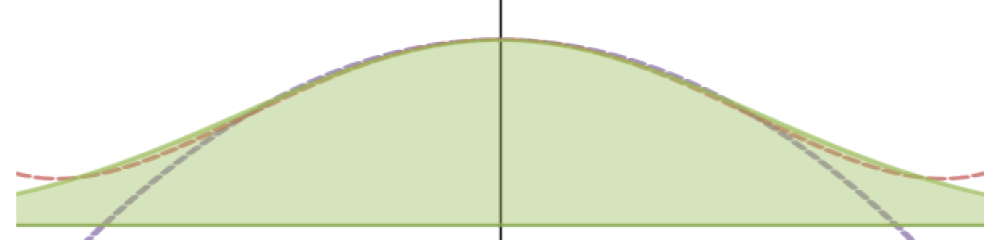

Check: The problem says adults were randomly selected, so bias is minimal or none. The population of U.S. adults is much, much bigger than 10 * 1009 or 10 * 1020, so individual participants are essentially independent and the standard error formula for the sampling distribution should be accurate. The successes and failures for the two samples are 676, 333, 622, and 398, all much greater than 10, so the shape of the sampling distribution should be approximately normal.

Calculate:

z* = 1.96

95% Confidence interval for p2-p1 = 0.060 ± 1.96 ( 0.0213) = 0.060 ± 0.041, or the interval from 0.019 to 0.101
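For anyone who wants to check the arithmetic above, here is a minimal sketch of the same two-proportion interval in Python. The function name `two_prop_ci` and its argument names are my own choices for illustration, and it assumes the usual unpooled standard error for a difference of proportions.

```python
from math import sqrt
from statistics import NormalDist


def two_prop_ci(x1, n1, x2, n2, conf=0.95):
    """Confidence interval for p2 - p1 using the unpooled standard error.

    Hypothetical helper for illustration; x = number of "yes" responses,
    n = sample size, with subscript 1 for 2007 and 2 for 2008.
    """
    p1_hat = x1 / n1
    p2_hat = x2 / n2
    diff = p2_hat - p1_hat
    # Unpooled SE: a confidence interval does not assume p1 = p2.
    se = sqrt(p1_hat * (1 - p1_hat) / n1 + p2_hat * (1 - p2_hat) / n2)
    # Critical value z* for the requested confidence level.
    z_star = NormalDist().inv_cdf(1 - (1 - conf) / 2)
    margin = z_star * se
    return diff, se, diff - margin, diff + margin


# 2007: 622 of 1,020 said yes. 2008: 676 of 1,009 said yes.
diff, se, low, high = two_prop_ci(622, 1020, 676, 1009)
print(f"difference = {diff:.3f}, SE = {se:.4f}")
print(f"95% CI: ({low:.3f}, {high:.3f})")
print("zero inside interval?", low <= 0 <= high)
```

With unrounded intermediate values the endpoints come out at roughly 0.018 and 0.102, a hair different from the hand calculation because that one rounds the difference and the margin before adding. Either way, zero is not in the interval.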

Conclude:

We can be 95% confident that the true value of p2 – p1, the change from 2007 to 2008, is in the interval 0.019 to 0.101. The value zero is nowhere in this interval. Therefore we have convincing statistical evidence at 95% confidence that p2 – p1 is not equal to zero and that the proportion of U.S. adults who would have answered that television commercials are an effective way to promote a new product did change from 2007 to 2008.

Again, I actually think it should be reasonable for students to skip some of the things I included: perhaps not show the entire calculation in the Calculate step, though I’d have to hash out what to leave out, and my conditions check is definitely over-thorough.

I will 100% swear with all of my statistical knowledge that this is a very, very solid answer to the question. It shows a deep understanding (I hope!) of the underlying statistical concepts, makes it QUITE clear that we have really good statistical evidence to support the claim, and, I think, makes all of that clear to the reader. We have built up the fact that confidence intervals and hypothesis tests are both perfectly good ways to get from knowledge about a sample to knowledge about a population and draw statistically reasonable conclusions.

However, in 2012 they literally did not consider this idea or at least they didn’t consider it in the formal Scoring Guidelines they published.

Here are some highlights from the rubric (not all direct quotes) with my annoyed commentary in purple like my skin turns when I read it.

Intent of Question The primary goal of this question was to assess student’s ability to identify, set up, perform, and interpret the results of an appropriate hypothesis test to address a particular question.

OH IT WAS, WAS IT? Then why isn’t that what the question actually asks? I don’t see the words “hypothesis test” in the question, guys.

Sample Solution: **Is a hypothesis test**

Okay I’m not actually mad about this. The sample solution is just one sample after all.

Step 1: To get full credit, must “identify correct parameters AND both hypotheses are labeled and state the correct relationship between the parameters.”

This is the first place I think I might lose credit. I identified the parameters and the inference procedure I was doing (which this rubric doesn’t explicitly require but some do). But I didn’t identify “both hypotheses.” Why? Because I’m not using a hypothesis test! There is no such thing as a null hypothesis in a confidence interval, because we are NOT assuming anything (the null hypothesis) is true. If I wrote a null hypothesis, I would be lying, because I have no intention of treating it as hypothetically given! All I wrote was the alternative hypothesis, but I didn’t call it that because that is a ridiculous name for something where there is no null. So it’s just “the claim I am evaluating for reasonability.” But would I get credit? The rubric says nothing, which I think means “No” and that is discriminatory against CI’s. There is no reason to state hypotheses to evaluate a claim with a CI.

Step 2: *Is fine because conditions are identical*

Step 3: To get full credit, students must calculate the test statistic and the p-value consistent with the stated alternative.

I don’t even begin to know how this would get translated to credit for a confidence interval version of this solution. I’d have to scroll through other rubrics to try to see what they’ve done in situations where they specifically requested a confidence interval. Full credit? WHO KNOWS? (Probably, since I did the math right, but probably not if I only reported what the calculator said, even though reporting z and p directly from the calculator screen with no additional work or evidence of understanding is enough for hypothesis test users to get full credit.)
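For comparison, here is a rough sketch of the calculation the rubric is looking for: a two-sided, two-proportion z-test on the same data. The name `two_prop_z_test` is mine, and I am assuming the standard pooled standard error under the null hypothesis p1 = p2, which is what a calculator's two-proportion z-test typically uses.

```python
from math import sqrt
from statistics import NormalDist


def two_prop_z_test(x1, n1, x2, n2):
    """Two-sided z-test of H0: p1 = p2 against Ha: p1 != p2.

    Hypothetical helper for illustration; uses the pooled proportion
    because the null hypothesis assumes one common p.
    """
    p1_hat = x1 / n1
    p2_hat = x2 / n2
    p_pooled = (x1 + x2) / (n1 + n2)
    se_pooled = sqrt(p_pooled * (1 - p_pooled) * (1 / n1 + 1 / n2))
    z = (p2_hat - p1_hat) / se_pooled
    # Two-sided p-value: area in both tails beyond |z|.
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# 2007: 622 of 1,020 said yes. 2008: 676 of 1,009 said yes.
z, p_value = two_prop_z_test(622, 1020, 676, 1009)
print(f"z = {z:.2f}, p-value = {p_value:.4f}")
```

The test statistic lands near z = 2.8 with a two-sided p-value of about 0.005, so the test and the 95% interval tell the same story about the same data. The only mechanical difference is that the test pools the two samples for its standard error and the interval does not.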

Step 4: To get full credit, must provide correct decision in context, also providing justification based on linkage between p-value and conclusion.

No p-value! Will my thorough explanation of how I didn’t capture 0 earn full credit? PROBABLY BUT I DON’T KNOW.

Okay, so some of my annoyance is that the rubric doesn’t even consider the possibility that students did it this way. Does that mean that it wouldn’t have been considered at the reading? Probably not. Lots of smart motivated people there, and I’m sure the answer would get elevated to high levels and would do well. But the rubric, which I encourage my students to read to understand the test, doesn’t mention the existence of confidence intervals AT ALL. That is discriminatory. Because CI’s are cool and a perfectly useful tool to answer this kind of question.

And if I can get full credit on the Calculate section for mindlessly writing down the values from the calculator, but can’t do the same for a confidence interval? That’s really discriminatory.

And if I would actually lose points for not writing things in terms of H0 and Ha? Then in that case, it’s not only discriminatory, it’s actively bad, because H0 is meaningless for confidence intervals. It’s like translating from English to German back to English to do that.

And if I somehow have to mention alpha or significance level to get full credit? See above. Confidence level should be enough. The problem didn’t require a specific alpha.

LOTS of AP problems avoid this problem by specifying the significance level (which heavily pushes a hypothesis test by its sheer existence), and I have seen one or two in this style that mention “A confidence interval is acceptable as long as alpha is correctly handled,” but the rubric’s guidance on those is thin, which means I can’t easily, as a teacher, help students figure out what they need to do for full credit. (And the most recent problem in this style, 2015 #4, has no such caveat in its published rubric, so…?)

In the end, this pushes me to tell them to go ahead and do hypothesis tests, so we can guarantee their 4 on this, the one semi-predictable AP problem. And that makes me sad, because tackling this problem from a pure CI perspective is just so, so pretty. And easier for many of them to wrap their heads around than the hypothesis test concept. Of course they need to know the H-test concept. But should we really encourage it as the one-and-only-way to find good evidence that something is true? Isn’t that the problem so many scientific journals are having?
